Introduction

For the past several years, AI has captured the attention of many industries, including finance and banking, where it has been helping institutions automate a wide range of services. Meanwhile, the European Union has taken a significant step forward with the introduction of the EU AI Act.

What exactly is the EU AI Act, and why does it matter? In this article, we'll cover the basics, explain what the Act means for banks, and look at how ready the banking sector is for it.

According to an Arizent survey, 56% of users would welcome the help of AI in financial recommendation services, while 48% see it as a tool for evaluating credit or loan applications.

What is the EU AI Act?

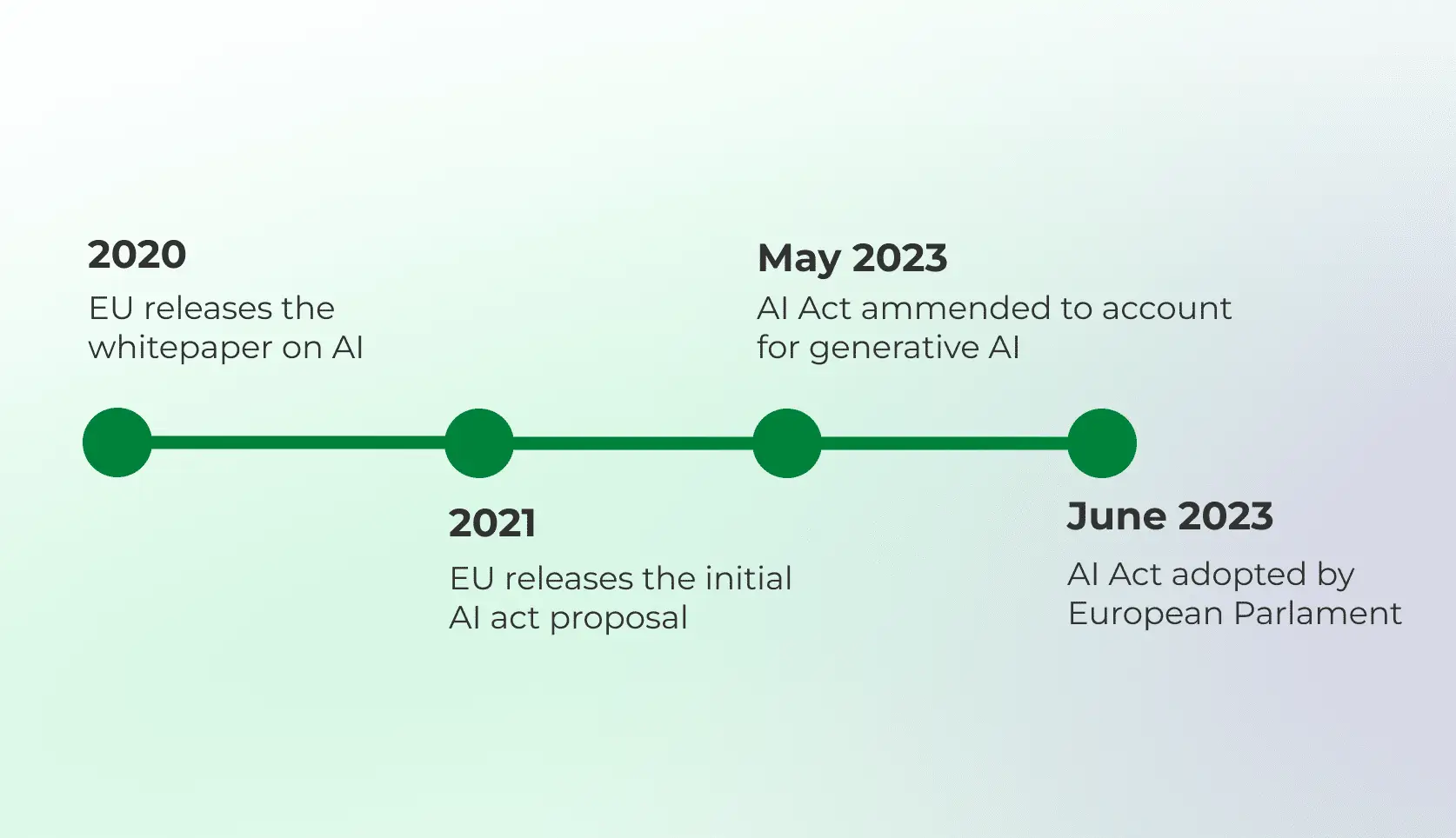

The EU AI Act is a major legislative initiative aimed at regulating AI systems within the European Union. Proposed by the European Commission, it takes a proactive approach to ensuring that AI technologies are developed and used in line with the EU's values and regulatory standards. It is expected to come into force in May 2024, followed by a transition period of at least two years before it is fully implemented.

Besides requiring AI systems to meet safety standards, the AI Act aims to protect users from “bad data”. A primary concern is the potential for AI algorithms to spread misinformation. The rise of AI-generated content, and of tools that process it, brings the problem of "hallucinations": outputs that an AI system presents as fact but that are fabricated or wrong. The AI Act seeks to address this risk with requirements for transparency and accountability.

The AI Act treats every AI platform or system under a “horizontal”, risk-based approach that follows a simple rule: the higher the risk an AI system poses, the stricter the rules that apply to it. Following this rule, the Act divides AI systems into roughly three main risk levels:

Unacceptable: The highest risk level, which will be prohibited outright, covers AI systems created and maintained for the purpose of manipulation, exploitation, or social scoring. Most remote biometric identification systems also fall into this category.

High risk: This category includes AI systems used for credit scoring by banks, the management and operation of critical infrastructure, education, access to essential private services, law enforcement, and the interpretation and application of the law. These systems must be assessed before entering the market and monitored throughout their life cycle.

Limited risk: The category most prominent in digital banking, covering systems that detect fraud or enhance the customer experience, such as customer service chatbots, spending analysis, or personal finance management tools. Generative AI, such as ChatGPT, also falls into this category and will have to comply with transparency requirements and EU copyright law.
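To make the "higher risk, stricter rules" logic concrete, here is a minimal Python sketch that maps the example use cases named above to risk tiers and illustrative obligations. The names `RiskLevel`, `EXAMPLE_USE_CASES`, `OBLIGATIONS`, and `obligations_for` are hypothetical, and the obligation lists are simplified assumptions drawn from this article rather than the Act's legal text; classifying a real system requires legal review.

```python
from enum import IntEnum


class RiskLevel(IntEnum):
    """Risk tiers as summarised in this article; a higher value means stricter rules."""
    LIMITED = 1
    HIGH = 2
    UNACCEPTABLE = 3


# Hypothetical mapping of the article's example use cases to tiers.
EXAMPLE_USE_CASES = {
    "credit_scoring": RiskLevel.HIGH,
    "fraud_detection": RiskLevel.LIMITED,
    "customer_service_chatbot": RiskLevel.LIMITED,
    "spending_analysis": RiskLevel.LIMITED,
    "social_scoring": RiskLevel.UNACCEPTABLE,
}

# Simplified, illustrative obligations per tier (an assumption, not legal text).
OBLIGATIONS = {
    RiskLevel.LIMITED: ["transparency"],
    RiskLevel.HIGH: ["transparency", "pre-market assessment", "lifecycle monitoring"],
    RiskLevel.UNACCEPTABLE: ["prohibited"],
}


def obligations_for(use_case: str) -> list[str]:
    """Return a copy of the illustrative obligations for a known example use case."""
    level = EXAMPLE_USE_CASES[use_case]
    return list(OBLIGATIONS[level])


if __name__ == "__main__":
    # Print use cases from least to most strictly regulated.
    for name, level in sorted(EXAMPLE_USE_CASES.items(), key=lambda kv: kv[1]):
        print(f"{name}: {level.name} -> {obligations_for(name)}")
```

Using an `IntEnum` keeps the tiers ordered, so "stricter than" becomes a plain comparison such as `RiskLevel.HIGH > RiskLevel.LIMITED`.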

Non-compliance with the rules on unacceptable AI practices or on data governance can lead to fines of up to €30 million or 6% of total global annual turnover, whichever is higher. Breaches involving high-risk systems can cost up to €20 million or 4% of turnover, and those involving limited-risk systems up to €10 million or 2% of turnover.
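Each fine cap combines a fixed amount with a share of turnover. The minimal Python sketch below computes the upper bound, assuming the higher of the two amounts applies and using the figures quoted in this article. `FINE_CAPS` and `max_fine` are hypothetical names; actual fines are set case by case by regulators, so this only illustrates the ceiling.

```python
from decimal import Decimal

# Caps as quoted in this article: (fixed amount in EUR, share of global annual turnover).
# The dictionary keys are hypothetical labels for the three tiers of fines.
FINE_CAPS = {
    "unacceptable": (Decimal("30000000"), Decimal("0.06")),
    "high": (Decimal("20000000"), Decimal("0.04")),
    "limited": (Decimal("10000000"), Decimal("0.02")),
}


def max_fine(category: str, global_turnover_eur: Decimal) -> Decimal:
    """Return the upper bound of a fine in EUR.

    Assumption: the higher of the fixed amount and the turnover-based
    amount applies. Decimal avoids floating-point rounding on money.
    """
    fixed_cap, turnover_share = FINE_CAPS[category]
    return max(fixed_cap, turnover_share * global_turnover_eur)


if __name__ == "__main__":
    for turnover in (Decimal("100000000"), Decimal("2000000000")):
        print(turnover, max_fine("unacceptable", turnover))
```

For a bank with €2 billion in global annual turnover, 6% is €120 million, which exceeds the €30 million fixed amount, so the cap in the highest tier is €120 million. For a firm with €100 million in turnover, 6% is only €6 million, so the €30 million figure is the binding cap.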

The Act seeks to establish clear rules to ensure the safety and trustworthiness of AI systems from both EU and non-EU providers. This includes measures to mitigate risks associated with AI, such as data privacy breaches, discrimination, and lack of transparency.


How banks use AI

Chatbots and AI assistants: Automating customer support and using AI as a personal financial advisor

Personalised services: Creating tailored financial advice and customizable, personalised experiences within the banking environment

Fraud detection: Analyzing transaction patterns and identifying anomalies that may indicate fraudulent activities

Credit scoring: Using AI algorithms to accurately assess customer behavior and creditworthiness

Automation: Using process automation to improve back-office productivity and minimize workload
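Fraud detection of the kind described above often starts from a simple statistical baseline. The minimal Python sketch below flags transaction amounts that deviate strongly from a customer's history using a z-score. It is an illustrative assumption, not how any particular bank's system works: `flag_anomalies` is a hypothetical name, the 3.0 threshold is arbitrary, and production systems rely on far richer features and models.

```python
from statistics import mean, stdev


def flag_anomalies(history: list[float], new_amounts: list[float],
                   threshold: float = 3.0) -> list[float]:
    """Return the new amounts whose z-score against `history` exceeds `threshold`.

    `history` must contain at least two past transaction amounts.
    """
    mu = mean(history)
    sigma = stdev(history)  # sample standard deviation
    if sigma == 0:
        # Every past amount was identical: treat any different amount as anomalous.
        return [amount for amount in new_amounts if amount != mu]
    return [amount for amount in new_amounts if abs(amount - mu) / sigma > threshold]


if __name__ == "__main__":
    past = [20.0, 25.0, 30.0, 22.0, 28.0, 24.0, 26.0]
    print(flag_anomalies(past, [27.0, 500.0]))
```

With this history (mean 25, sample standard deviation about 3.4), a payment of 27 passes while a payment of 500 is flagged.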

How does the AI Act affect the banking sector, and what should banks do?

System Assessment: The Act emphasizes mitigating risks where AI decisions affect individuals' access to financial products. Banks and financial firms must review their software and identify any AI systems used in their digital banking or automation processes. Such systems may fall under the AI Act and must be assessed and classified into one of the risk categories.

Requirements Compliance: Financial institutions must ensure their AI systems comply with the Act's transparency and accountability requirements. This is especially important for high-risk systems, which require the highest level of compliance and monitoring. AI systems must give users clear information about their capabilities, limitations, and potential impact.

Data Governance: Banks must establish a robust internal framework for the AI systems they use. Effective data governance is crucial here, with strict management of data quality, privacy, and security to safeguard sensitive customer information and comply with regulations such as the GDPR.

Oversight and Adaptation: Specialized staff must be trained on and briefed about all AI Act requirements. In certain cases, the Act requires human oversight of AI systems to ensure decisions are fair, unbiased, and in line with legal and ethical standards. Because the regulation is still being updated, it is essential to follow the current state of the AI Act and adjust accordingly.

Conclusion

The EU AI Act represents a significant milestone in the regulation of artificial intelligence within the European Union. By setting clear rules and standards, it aims to promote the responsible development and deployment of AI technologies while safeguarding fundamental rights and values.

In our upcoming webinar, we will explore the EU AI Act in more detail. You will learn why the AI Act matters for banks, how it defines high-risk AI systems, and how AI Act compliance fits into your bank’s risk management strategy.