The timing of transaction enrichment determines which digital banking features a bank can realistically deliver. Getting this architectural decision right is not only a backend concern; it is a product strategy decision.

In this article, we compare real-time and batch data enrichment and show where each one belongs in a bank's architecture.

Why timing matters in real-time data enrichment

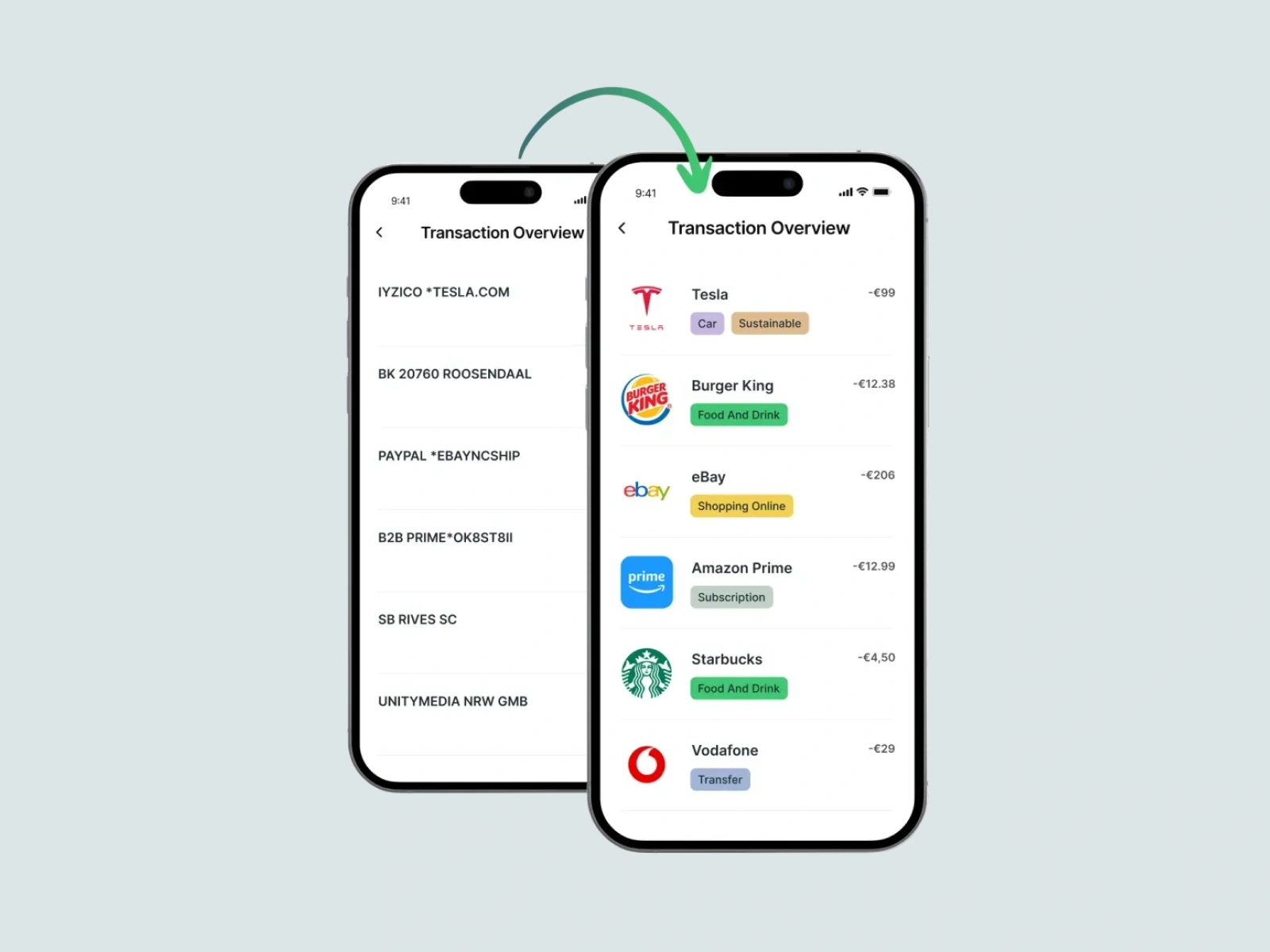

Transaction enrichment is often introduced as a cosmetic upgrade: replacing "B2B PRIME*OK8ST8II5" with a clean merchant name in the mobile feed, so the customer can see they are paying for an Amazon Prime subscription. This framing underestimates what enrichment actually does, and it leads to poor architectural decisions about where and when it should run.

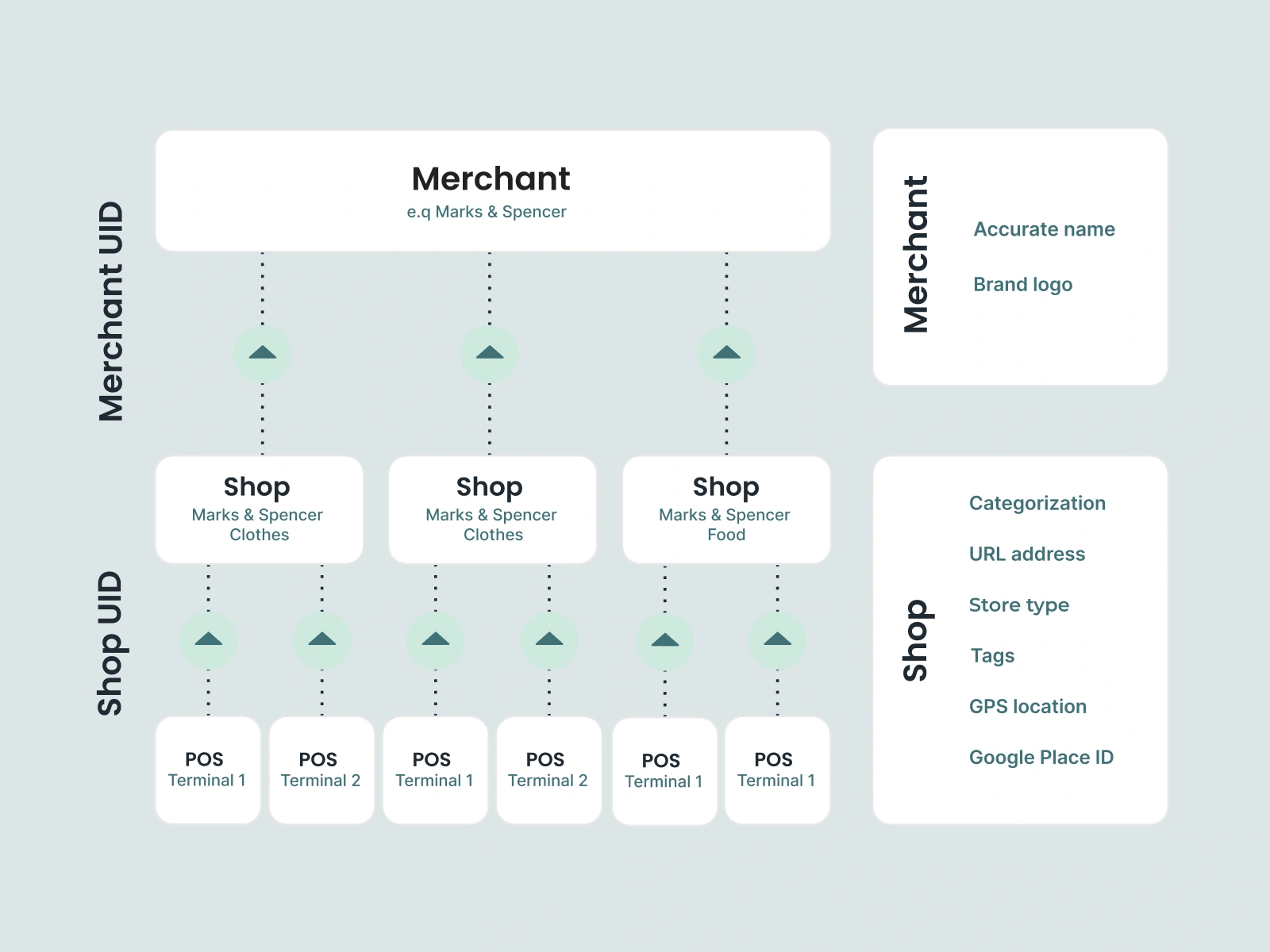

The real purpose of enrichment is to produce structured merchant data that can be consumed reliably across multiple systems: the notification engine, the search index, the PFM layer, the fraud review pipeline, and the analytics warehouse. When enrichment is positioned correctly, all of these systems inherit clean, consistent data.


This means the first question a bank or fintech should ask is not "should we enrich?" - it is "which product features do we need, and when do those features need structured data?"
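To make "structured merchant data" concrete, here is a minimal sketch of one enriched record being read by three of the consumers named above. The field names, the amount and the currency are illustrative assumptions, not any provider's actual schema; the point is that the notification engine, search index and PFM layer all derive their output from the same clean fields.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class EnrichedTransaction:
    """One structured record shared by every downstream system.

    Field names are illustrative assumptions, not any provider's actual schema.
    """
    transaction_id: str
    raw_descriptor: str          # original acquirer/terminal string, kept for audit
    amount_minor: int            # amount in minor units (e.g. cents) to avoid float rounding
    currency: str
    merchant_name: str           # clean merchant name produced by enrichment
    category: str
    logo_url: Optional[str] = None


def notification_text(tx: EnrichedTransaction) -> str:
    # Notification engine: shows the clean name, never the raw descriptor.
    return f"You paid {tx.amount_minor / 100:.2f} {tx.currency} at {tx.merchant_name}"


def search_tokens(tx: EnrichedTransaction) -> set[str]:
    # Search index: tokens come from the clean name and category, so a search
    # for "prime" matches regardless of what the acquirer string looked like.
    return {word.lower() for word in f"{tx.merchant_name} {tx.category}".split()}


def budget_entry(tx: EnrichedTransaction) -> tuple[str, int]:
    # PFM layer: budgets are keyed by the enriched category.
    return (tx.category, tx.amount_minor)


tx = EnrichedTransaction(
    transaction_id="tx-001",
    raw_descriptor="B2B PRIME*OK8ST8II5",
    amount_minor=899,
    currency="EUR",
    merchant_name="Amazon Prime",
    category="Subscriptions",
)
print(notification_text(tx))  # You paid 8.99 EUR at Amazon Prime
```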

Batch enrichment: strengths, limitations, and where it belongs

Batch enrichment processes transactions in groups - typically overnight or on a periodic schedule. Raw transaction records are written to a data store; a job runs against them, and the enriched output is written back. For banks with established data warehouses and analytics workflows, this is often the default pattern.
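The write-then-enrich pattern can be sketched as a job that picks up unenriched records and processes them in fixed-size batches. This is a minimal illustration under stated assumptions: `BulkEnricher` stands in for a hypothetical bulk enrichment client, and an in-memory list stands in for the data store.

```python
from dataclasses import dataclass
from typing import Callable, Optional

# Hypothetical bulk client: takes raw descriptors, returns (merchant_name, category) per item.
BulkEnricher = Callable[[list[str]], list[tuple[str, str]]]


@dataclass
class StoredTransaction:
    transaction_id: str
    raw_descriptor: str
    merchant_name: Optional[str] = None   # stays None until the batch job has run
    category: Optional[str] = None


def run_batch_job(store: list[StoredTransaction], enrich_bulk: BulkEnricher,
                  batch_size: int = 1000) -> int:
    """Enrich all records that are still unenriched, one batch at a time.

    Returns the number of records updated. A real job would read from and write
    back to the data store; an in-memory list stands in for it here.
    """
    pending = [tx for tx in store if tx.merchant_name is None]
    for start in range(0, len(pending), batch_size):
        chunk = pending[start:start + batch_size]
        results = enrich_bulk([tx.raw_descriptor for tx in chunk])
        # Write the enriched fields back onto the stored records.
        for tx, (name, category) in zip(chunk, results):
            tx.merchant_name, tx.category = name, category
    return len(pending)
```

Because the job only selects records whose `merchant_name` is still empty, re-running it is harmless. A historical-correction run would instead select records by date range and overwrite them.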

The strengths of batch enrichment are straightforward. It is operationally simple, imposes no latency constraints on the transaction processing path, is cost-efficient at scale, and integrates naturally into existing data pipelines. The workflows it serves still need high-quality data, but they do not need it immediately.

Where batch enrichment performs well

- Spending analytics and BI dashboards: Overnight latency is acceptable; accuracy matters more than speed.

- Regulatory and compliance reporting: Reports are submitted on a fixed schedule, so there is no need to process transactions the moment they happen.

- Historical data correction: When banks migrate customers or clean up old records, batch jobs can re-process large volumes of past transactions without touching the live pipeline.

- High-volume, low-priority processing: For transactions that nobody is waiting on, batch is cheaper and simpler to run at scale.

The core limitation of batch enrichment is timing. A transaction processed at 14:32 will not carry a clean merchant name until the next enrichment job runs - often hours later. For any feature that needs to act on transaction data immediately, that delay is a functional blocker.
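The size of that delay is easy to work out. Assuming, purely for illustration, a single nightly job at 02:00, the 14:32 transaction above waits 11 hours 28 minutes before it carries a clean merchant name. The sketch below computes this for any timestamp; it ignores how long the job itself takes to reach the record, so real delays are longer.

```python
from datetime import datetime, time, timedelta


def next_batch_run(processed_at: datetime, run_at: time = time(2, 0)) -> datetime:
    """First scheduled run strictly after processed_at, assuming one run per day at run_at."""
    candidate = datetime.combine(processed_at.date(), run_at)
    if candidate <= processed_at:
        candidate += timedelta(days=1)
    return candidate


def enrichment_delay(processed_at: datetime, run_at: time = time(2, 0)) -> timedelta:
    # Time the transaction spends without a clean merchant name
    # (ignores how long the job itself takes to reach this record).
    return next_batch_run(processed_at, run_at) - processed_at


print(enrichment_delay(datetime(2024, 5, 14, 14, 32)))  # 11:28:00
```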

Real-time enrichment: what it enables and what it requires

Real-time enrichment runs during transaction ingestion - before the transaction record is written to any downstream system. The raw transaction data is passed to an enrichment service as part of the processing pipeline, the enriched record (with clean merchant name, category, logo, and metadata) is returned within milliseconds, and that structured record is what gets stored and distributed.

The architectural consequence is significant: every downstream consumer receives an already-enriched record. There is no dependency on a later job. There is no need to handle unenriched states. The transaction feed becomes trustworthy from the moment a transaction occurs.
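The ordering that makes this work can be sketched in a few lines: enrich synchronously, store the enriched record, then hand that same record to every consumer. The `enrich`, `store` and consumer callables are hypothetical stand-ins for an enrichment-service client, the ledger write, and systems such as the notification engine and search indexer.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class RawTransaction:
    transaction_id: str
    raw_descriptor: str
    amount_minor: int


@dataclass(frozen=True)
class EnrichedRecord:
    transaction_id: str
    raw_descriptor: str
    amount_minor: int
    merchant_name: str
    category: str


def ingest(
    raw: RawTransaction,
    enrich: Callable[[str], tuple[str, str]],            # hypothetical enrichment client
    store: Callable[[EnrichedRecord], None],             # e.g. the transaction ledger write
    consumers: list[Callable[[EnrichedRecord], None]],   # notifications, search indexer, ...
) -> EnrichedRecord:
    # 1. Enrich synchronously, before anything is persisted.
    merchant_name, category = enrich(raw.raw_descriptor)
    record = EnrichedRecord(raw.transaction_id, raw.raw_descriptor,
                            raw.amount_minor, merchant_name, category)
    # 2. Store the enriched record, never the raw one.
    store(record)
    # 3. Every downstream consumer receives the same already-enriched record.
    for consume in consumers:
        consume(record)
    return record
```

No consumer ever sees a `RawTransaction`, which is what removes the need to handle unenriched states downstream.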

Product features that require real-time enrichment

- Push notifications: A notification that fires seconds after a transaction must carry a clean merchant name. A notification showing a raw terminal string damages trust and increases support volume.

- Transaction search: Search indexes built on raw data return unreliable results. Customers searching for a specific merchant will miss transactions if the index contains the original acquirer string.

- PFM and budgeting: Accurate categorisation at the point of transaction eliminates the need for retroactive corrections. Real-time enrichment means budgets update correctly as spending happens.

- Subscription detection: Identifying recurring charges requires pattern matching against structured merchant data. Batch enrichment introduces a window during which recurring transactions may be missed or misclassified.

- Fraud and dispute review: Analysts reviewing flagged transactions need context immediately. Enriched merchant data - including location, category, and brand - reduces investigation time and false positive rates.
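Subscription detection shows why structured merchant data matters. The sketch below is one simple heuristic, not a production detector: it groups charges by clean merchant name and flags merchants charged at a roughly monthly cadence with stable amounts. The thresholds (at least three charges, 25 to 35 days apart, amounts within 5%) are illustrative assumptions. If raw descriptors differ between charges, grouping on them splits one subscription into unrelated one-off payments and nothing is detected.

```python
from collections import defaultdict
from datetime import date


def detect_subscriptions(
    transactions: list[tuple[str, date, int]],  # (merchant name, booking date, amount in minor units)
    min_charges: int = 3,
    min_gap_days: int = 25,
    max_gap_days: int = 35,
    amount_tolerance: float = 0.05,
) -> set[str]:
    """Flag merchants charged at a roughly monthly cadence with stable amounts."""
    by_merchant: dict[str, list[tuple[date, int]]] = defaultdict(list)
    for merchant, booked_on, amount in transactions:
        by_merchant[merchant].append((booked_on, amount))

    recurring = set()
    for merchant, charges in by_merchant.items():
        if len(charges) < min_charges:
            continue
        charges.sort()  # chronological order
        gaps = [(later[0] - earlier[0]).days for earlier, later in zip(charges, charges[1:])]
        amounts = [amount for _, amount in charges]
        reference = amounts[0]
        regular = all(min_gap_days <= gap <= max_gap_days for gap in gaps)
        stable = all(abs(a - reference) <= amount_tolerance * reference for a in amounts)
        if regular and stable:
            recurring.add(merchant)
    return recurring
```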

Real-time enrichment does require investment. It introduces a synchronous dependency in the transaction pipeline, which means latency and availability must be managed carefully. The enrichment service must meet SLA requirements consistent with card processing expectations - typically sub-100ms latency and very high availability. For banks evaluating API-based enrichment providers, these are the primary technical requirements to validate.
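When validating a provider against a latency budget, the tail matters more than the average, because every slow call delays a live transaction. A minimal sketch of checking measured latencies against a p99 budget of 100 ms is below; the choice of p99 and the nearest-rank percentile definition are assumptions (other percentile definitions exist), and availability has to be measured separately.

```python
import math


def percentile(samples_ms: list[float], pct: float) -> float:
    """Nearest-rank percentile: the smallest sample such that pct% of samples are <= it."""
    if not samples_ms:
        raise ValueError("need at least one latency sample")
    ordered = sorted(samples_ms)
    rank = max(1, math.ceil(pct * len(ordered) / 100))  # 1-based rank
    return ordered[rank - 1]


def meets_latency_budget(samples_ms: list[float], budget_ms: float = 100.0,
                         pct: float = 99.0) -> bool:
    # Judge the tail, not the mean: a fast average can hide slow outliers.
    return percentile(samples_ms, pct) <= budget_ms
```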


Getting the decision right: pipeline position and hybrid models

The most consequential error in enrichment architecture is not choosing the wrong model; it is placing enrichment in the wrong position in the pipeline. Many banks discover this problem after the fact: enrichment is running, but it is running after the notification layer and after the search indexer. The data gets cleaned, but too late for the features that need it.
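A mispositioned enrichment step can be caught with a simple ordering check over the pipeline definition. In this sketch the stage names (`enrich`, `notify`, `search_index`) are hypothetical labels; the function returns every stage that needs enriched data but runs before enrichment does.

```python
def consumers_before_enrichment(
    pipeline: list[str],
    enrich_stage: str = "enrich",
    needs_enriched: frozenset[str] = frozenset({"notify", "search_index"}),
) -> list[str]:
    """Return the stages that need enriched data but run before enrichment.

    An empty list means enrichment is positioned correctly. Stage names are
    hypothetical labels for this sketch.
    """
    if enrich_stage not in pipeline:
        # No enrichment in the path at all: every such consumer sees raw data.
        return [stage for stage in pipeline if stage in needs_enriched]
    cut = pipeline.index(enrich_stage)
    return [stage for stage in pipeline[:cut] if stage in needs_enriched]


print(consumers_before_enrichment(["ingest", "store", "notify", "search_index", "enrich"]))
# ['notify', 'search_index']
```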

Most banks that have deployed enrichment at scale use a hybrid model: real-time enrichment at ingestion for all customer-facing transaction data, and batch processing for analytics pipelines, reporting, and historical remediation. These approaches serve different consumers with different timing requirements.

In a hybrid model, the real-time enrichment API sits in the transaction ingestion path and enriches each transaction before it is written. The same enriched data is then consumed by the analytics pipeline, which may apply additional categorisation, aggregation, or modelling on a periodic schedule. The key is that analytics consumes already-enriched data - it does not depend on batch enrichment to produce clean records that should already exist.
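On the analytics side of a hybrid model, the periodic job only aggregates data that already carries a category. The sketch below totals spend per month and category. Raising an error on an unenriched record, rather than enriching it inside the analytics job, is a design assumption of this sketch: it surfaces gaps in the real-time path instead of hiding them.

```python
from collections import Counter
from datetime import date
from typing import Optional


def monthly_spend_by_category(
    records: list[tuple[date, Optional[str], int]],
) -> dict[tuple[str, str], int]:
    """Periodic analytics job over records enriched at ingestion.

    Each record is (booking date, category, amount in minor units). The job only
    aggregates; it does not categorise anything itself.
    """
    totals: Counter = Counter()
    for booked_on, category, amount in records:
        if category is None:
            # A missing category points to a gap in the real-time path,
            # so fail loudly instead of silently enriching here.
            raise ValueError(f"unenriched record booked on {booked_on}")
        totals[(booked_on.strftime("%Y-%m"), category)] += amount
    return dict(totals)
```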

For banks that want to avoid building this infrastructure from scratch, Tapix is a ready-made enrichment API built for exactly this purpose. It runs in real time at ingestion, returning clean merchant names, logos, categories, and location data within 3 milliseconds. It also supports batch processing for historical data and provides an invalidations API that corrects stored records when merchant data changes over time.

The decision between real-time and batch enrichment is, ultimately, a decision about product ambition.